I was in a room not long ago with a leadership team that had just “successfully” deployed an AI solution.

On paper, everything looked right. The model was accurate, the demo impressed stakeholders, and the vendor promised scalability. Then production hit.

Latency spiked, costs exploded, responses became inconsistent, and no one could explain why. Within six weeks, the system was quietly rolled back.

The problem wasn’t the AI. The model itself was fine.

The problem was that there was no enterprise-grade architecture underneath it.

This is the pattern I continue to see as a Vice President of Delivery working with enterprise clients. AI is being treated like a feature when it is actually an entire system.

In 2026, the companies that are succeeding with AI are not the ones with the “best models.” They are the ones that architect AI the way they would any other critical enterprise platform.

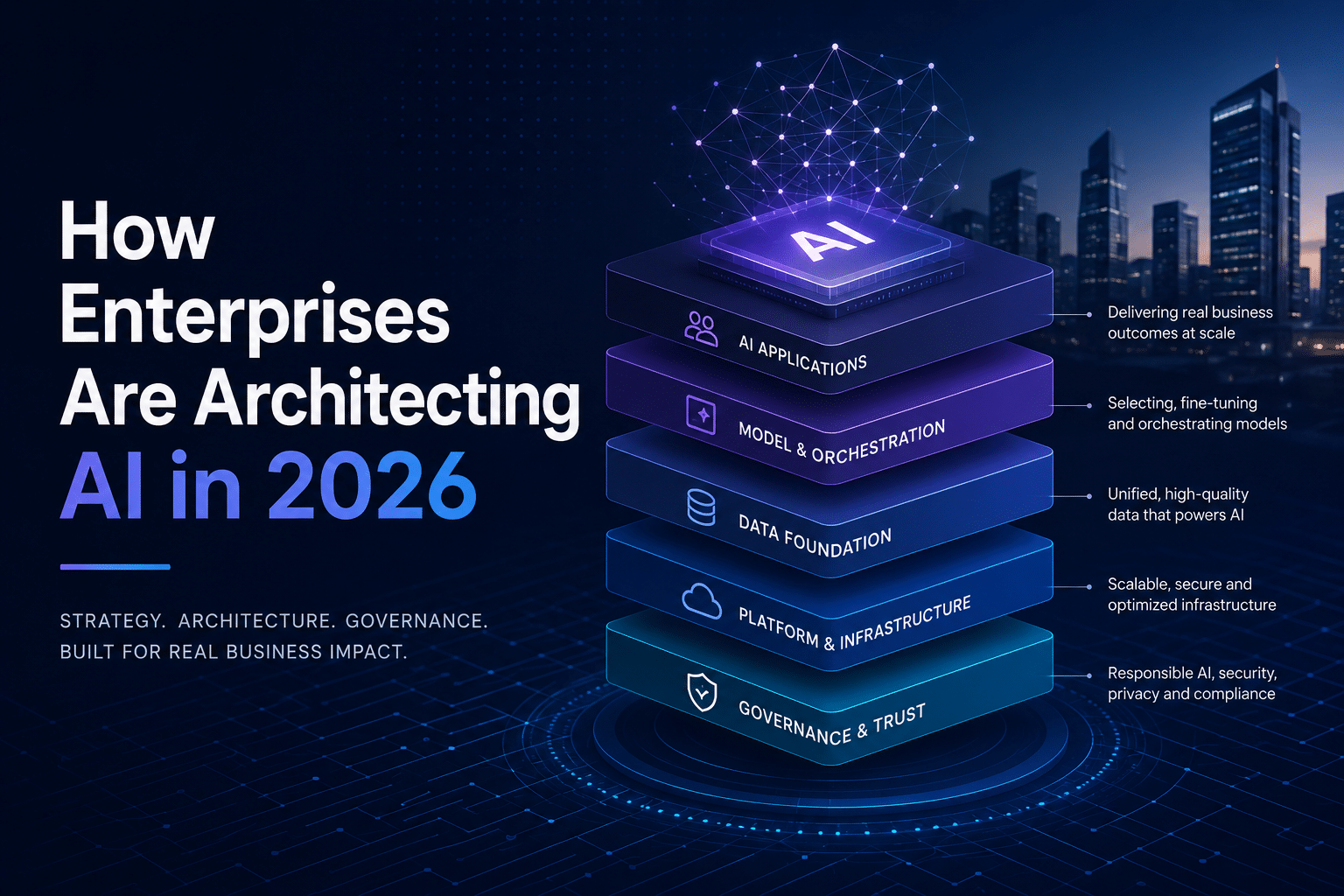

The Enterprise AI Architecture Stack

At a high level, every enterprise AI system that actually works at scale is built on four layers: data, model, orchestration, and governance.

The data layer is the foundation. If your data is fragmented, stale, or poorly structured, everything downstream becomes unreliable.

The model layer is what most people focus on, but it is only one piece. Even the best model cannot compensate for poor input data or poor system design.

The orchestration layer is where things get real. This is how requests flow, how services interact, and how logic is applied across multiple systems.

Skip this layer, and you end up with brittle, hard-coded integrations that break under load.

The governance layer is what keeps everything under control. It defines who can access what, how outputs are validated, and how risk is managed.

When enterprises skip governance, they don’t just create technical problems. They create business and legal exposure.
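Here is a minimal Python sketch of the four layers as separate components. The class names (`DataLayer`, `ModelLayer`, `GovernanceLayer`, `Orchestrator`) and the stand-in implementations are hypothetical. A real system would back them with a data platform, a model endpoint, and a policy engine.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class DataLayer(Protocol):
    """Supplies context for a query (hypothetical interface)."""
    def fetch_context(self, query: str) -> list[str]: ...


class ModelLayer(Protocol):
    """Turns a prompt into text; could wrap a public or a private model."""
    def generate(self, prompt: str) -> str: ...


@dataclass
class GovernanceLayer:
    """Access rules and output checks, kept separate from model code."""
    allowed_roles: set[str]
    output_checks: list[Callable[[str], bool]] = field(default_factory=list)

    def authorize(self, role: str) -> None:
        if role not in self.allowed_roles:
            raise PermissionError(f"role {role!r} may not use this service")

    def validate(self, output: str) -> str:
        for check in self.output_checks:
            if not check(output):
                raise ValueError("model output failed a governance check")
        return output


@dataclass
class Orchestrator:
    """Routes each request through governance, data, and model layers in order."""
    data: DataLayer
    model: ModelLayer
    governance: GovernanceLayer

    def handle(self, role: str, query: str) -> str:
        self.governance.authorize(role)
        context = self.data.fetch_context(query)
        prompt = "\n".join(context + [f"Question: {query}"])
        return self.governance.validate(self.model.generate(prompt))


# Stand-in implementations so the sketch runs without any external service.
class StaticData:
    def fetch_context(self, query: str) -> list[str]:
        return ["Policy: refunds are processed within 5 days."]


class EchoModel:
    def generate(self, prompt: str) -> str:
        return f"Answer based on {len(prompt.splitlines())} lines of input"
```

Each layer sits behind its own interface. Swapping a public model for a private one, or tightening an output check, touches one component instead of every integration.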

If you’re interested in how we approach structuring these systems, we outline this in our enterprise delivery methodology at Seisan.

Public LLMs vs Private Models vs Hybrid

There are three real architectural patterns I see in the field right now.

The first is public LLMs. These are easy to implement and well-suited for general-purpose use cases such as summarization, internal knowledge queries, and support automation.

The tradeoff is control. You are relying on external systems, and data sensitivity becomes a real concern.

The second is private models. These are trained or fine-tuned on internal data and deployed within a controlled environment.

They offer greater control and security, but they come with higher costs and more operational complexity.

The third, and most common in 2026, is hybrid.

In a hybrid model, enterprises use public LLMs for general-purpose tasks while layering private models or retrieval systems on top of them for proprietary data.

This is where we see the most success because it balances speed, cost, and control.

We’ve implemented this pattern in multiple client environments, often tying it into internal knowledge systems.
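A hybrid setup often comes down to a routing decision. The sketch below uses a toy in-memory knowledge base and a crude term-overlap retriever in place of a real search or vector index. `public_llm` and `private_llm` are placeholder callables, not any vendor’s API.

```python
import re
from typing import Callable

# Hypothetical internal knowledge base; in practice this would be a search or vector index.
INTERNAL_DOCS = {
    "pto-policy": "Employees accrue 1.5 days of paid time off per month.",
    "vpn-setup": "Install the corporate VPN client before accessing internal tools.",
}


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, min_overlap: int = 2) -> list[str]:
    """Return internal documents sharing at least `min_overlap` terms with the query, best first."""
    query_terms = tokenize(query)
    scored = []
    for text in INTERNAL_DOCS.values():
        overlap = len(query_terms & tokenize(text))
        if overlap >= min_overlap:
            scored.append((overlap, text))
    return [text for _, text in sorted(scored, reverse=True)]


def answer(query: str, public_llm: Callable[[str], str], private_llm: Callable[[str], str]) -> str:
    """Send questions about proprietary data (with retrieved context) to the private model;
    send everything else to the public model."""
    context = retrieve(query)
    if context:
        prompt = "Use only this context:\n" + "\n".join(context) + f"\n\nQuestion: {query}"
        return private_llm(prompt)
    return public_llm(query)
```

The threshold (`min_overlap`) is the lever. Set it too low and general questions land on the more expensive private path. Set it too high and questions about proprietary data go to the public model without grounding.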

The Inference Architecture Problem

This is where most AI initiatives fail.

Proofs of concept run in isolation. Production systems deal with real users, real concurrency, and real expectations.

Suddenly, you are handling thousands of requests, each triggering expensive model calls with unpredictable latency.

Costs begin to scale linearly with usage, and performance becomes inconsistent.

This is where inference architecture matters.

We start by introducing caching layers to avoid redundant model calls. If the same question is asked repeatedly, the system should not recompute the answer every time.
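Here is a minimal sketch of a caching layer. It assumes an in-process dictionary and a `model_call` function you supply. It normalizes case and whitespace before hashing, so trivially different phrasings share an entry, and a time-to-live keeps answers from going stale.

```python
import hashlib
import time
from typing import Callable


class ResponseCache:
    """In-memory response cache keyed on a normalized prompt, with a time-to-live.

    A production system would more likely use a shared store; this sketch keeps a dict.
    """

    def __init__(self, model_call: Callable[[str], str], ttl_seconds: float = 3600,
                 clock: Callable[[], float] = time.monotonic):
        self.model_call = model_call
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: dict[str, tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Collapse case and whitespace so trivially different phrasings share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, prompt: str) -> str:
        key = self._key(prompt)
        entry = self._store.get(key)
        now = self.clock()
        if entry is not None and now - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]
        # Missing or expired: pay for one model call and remember the result.
        self.misses += 1
        result = self.model_call(prompt)
        self._store[key] = (now, result)
        return result
```

This is exact-match caching only. Semantic caching matches on embedding similarity, so it also catches paraphrases. It can also return a cached answer to a question that only looks similar, so it needs more careful tuning.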

Batching, which groups many requests into a single model call, becomes critical for efficiency, especially in high-throughput environments.
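One way to batch at the application layer is a micro-batcher that holds requests for a few milliseconds and sends them together. This asyncio sketch assumes a hypothetical `batch_fn` that accepts a list of prompts and returns outputs in the same order. The size and wait limits are illustrative defaults.

```python
import asyncio
from typing import Awaitable, Callable, Optional

# Hypothetical batched endpoint: takes N prompts, returns N outputs in the same order.
BatchFn = Callable[[list[str]], Awaitable[list[str]]]


class MicroBatcher:
    """Collects concurrent requests and sends them to the model as one batch.

    A batch is flushed when it reaches `max_batch_size` or when `max_wait_s`
    has passed since the first request in the batch arrived.
    """

    def __init__(self, batch_fn: BatchFn, max_batch_size: int = 8, max_wait_s: float = 0.01):
        self.batch_fn = batch_fn
        self.max_batch_size = max_batch_size
        self.max_wait_s = max_wait_s
        self._pending: list[tuple[str, asyncio.Future]] = []
        self._timer: Optional[asyncio.TimerHandle] = None
        self._tasks: set[asyncio.Task] = set()  # keep references so tasks are not garbage-collected

    async def submit(self, prompt: str) -> str:
        loop = asyncio.get_running_loop()
        future = loop.create_future()
        self._pending.append((prompt, future))
        if len(self._pending) >= self.max_batch_size:
            self._flush()
        elif self._timer is None:
            # First request of a new batch: start the max-wait clock.
            self._timer = loop.call_later(self.max_wait_s, self._flush)
        return await future

    def _flush(self) -> None:
        if self._timer is not None:
            self._timer.cancel()
            self._timer = None
        batch, self._pending = self._pending, []
        if batch:
            task = asyncio.get_running_loop().create_task(self._send(batch))
            self._tasks.add(task)
            task.add_done_callback(self._tasks.discard)

    async def _send(self, batch: list[tuple[str, asyncio.Future]]) -> None:
        try:
            results = await self.batch_fn([prompt for prompt, _ in batch])
            if len(results) != len(batch):
                raise ValueError("batch_fn returned the wrong number of results")
            for (_, future), result in zip(batch, results):
                if not future.done():
                    future.set_result(result)
        except Exception as exc:
            # A failed batch call fails every request waiting on it.
            for _, future in batch:
                if not future.done():
                    future.set_exception(exc)
```

The trade-off is a small amount of added latency, at most `max_wait_s`, in exchange for fewer and fuller model calls. Many model-serving stacks also batch on the server side, so check what your inference platform already does before adding this layer.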

Edge deployment is another strategy, particularly for latency-sensitive applications. Moving inference closer to the user can dramatically improve response times.

Cloud providers have begun formalizing these patterns in their own guidance, such as Microsoft Azure’s AI architecture recommendations and best practices. The enterprises that solve this problem early are the ones that avoid the “AI works in demo but fails in production” trap.

Data Pipeline Architecture

If the model is the brain, the data pipeline is the bloodstream.

Data flows from ingestion to transformation to storage, then into training or retrieval systems, and finally into inference.

Break any part of that chain, and you introduce risk.

I worked with a client where the model performance started degrading over time. No one could explain it.

After digging in, we found that a transformation step earlier in the pipeline had slightly changed a field’s format. That change propagated silently through the pipeline, causing model drift.

It took weeks to identify because there was no visibility into the pipeline.

This is why observability and data lineage matter just as much as the model itself.
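One guard against a silent format change like this is a data contract checked at each stage boundary. This sketch assumes a hypothetical record schema (`customer_id`, `order_date`, `amount`) and regex-based format rules. It fails loudly when the violation rate for any field crosses a threshold.

```python
import re
from dataclasses import dataclass, field

# Hypothetical contract for records leaving the transformation stage.
EXPECTED_FORMATS = {
    "customer_id": re.compile(r"^C\d{6}$"),
    "order_date": re.compile(r"^\d{4}-\d{2}-\d{2}$"),  # ISO 8601 date
    "amount": re.compile(r"^\d+\.\d{2}$"),
}


@dataclass
class StageReport:
    stage: str
    checked: int = 0
    violations: dict[str, int] = field(default_factory=dict)

    def violation_rate(self, field_name: str) -> float:
        return self.violations.get(field_name, 0) / self.checked if self.checked else 0.0


def check_stage(stage: str, records: list[dict[str, str]]) -> StageReport:
    """Count, per field, how many records no longer match the expected format."""
    report = StageReport(stage)
    for record in records:
        report.checked += 1
        for name, pattern in EXPECTED_FORMATS.items():
            value = record.get(name)
            if value is None or not pattern.match(value):
                report.violations[name] = report.violations.get(name, 0) + 1
    return report


def assert_healthy(report: StageReport, max_rate: float = 0.01) -> None:
    """Stop the pipeline instead of letting a format change propagate downstream."""
    for name in EXPECTED_FORMATS:
        rate = report.violation_rate(name)
        if rate > max_rate:
            raise ValueError(f"{report.stage}: {name} violates its format in {rate:.0%} of records")
```

If a check like this had run after the transformation step, the changed field would likely have surfaced on the first bad batch rather than weeks later as degraded model performance.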

We go deeper into this in our work around enterprise data platforms and AI integration at Seisan, particularly when aligning AI systems with existing data ecosystems.

Security and Compliance by Design

One of the biggest mistakes I see is treating security as a post-implementation step.

That approach does not work with AI.

AI systems touch sensitive data, generate unpredictable outputs, and often integrate across multiple systems.

You need to think about access control, data masking, auditability, and model behavior from day one.
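To show what building these controls in from the start can look like, here is a sketch of a request gate. It checks a role-based permission, masks sensitive values before anything is sent to the model, and writes an audit record. The roles, actions, and regex patterns are hypothetical and fall well short of complete PII coverage.

```python
import hashlib
import json
import re
import time

# Illustrative patterns only; real PII detection needs far broader coverage.
MASKING_RULES = [
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[EMAIL]"),
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
]

# Hypothetical role-to-action permissions.
ROLE_PERMISSIONS = {"analyst": {"summarize"}, "admin": {"summarize", "export"}}


def mask(text: str) -> str:
    for pattern, token in MASKING_RULES:
        text = pattern.sub(token, text)
    return text


def prepare_request(user: str, role: str, action: str, text: str, audit_log: list[str]) -> str:
    """Check permissions, mask sensitive values, and append an audit record (allowed or not)."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    masked = mask(text)
    audit_log.append(json.dumps({
        "ts": time.time(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
        # Store a hash of the masked prompt so the log itself holds no prompt text.
        "prompt_sha256": hashlib.sha256(masked.encode("utf-8")).hexdigest(),
    }))
    if not allowed:
        raise PermissionError(f"{role} may not perform {action}")
    return masked
```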

This becomes even more critical in regulated industries like finance and healthcare.

Frameworks such as the National Institute of Standards and Technology (NIST) AI Risk Management Framework provide a solid foundation for thinking about risk in these systems.

If you wait until after deployment to address these concerns, you are not just adding friction. You are introducing real exposure.

Cost Architecture (The Part Nobody Plans For)

AI costs surprise almost everyone.

What looks inexpensive at small scale can become a major line item as usage grows.

The biggest driver is inference cost. Every request has a price, and those requests add up quickly.
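A back-of-the-envelope model shows why. Cost grows linearly with request volume, so a workload that is trivial in a pilot becomes significant in production. The token counts and per-million-token prices below are assumptions for illustration, not any provider’s actual rates.

```python
def monthly_inference_cost(
    requests_per_day: float,
    input_tokens: int,
    output_tokens: int,
    input_price_per_m: float,
    output_price_per_m: float,
    cache_hit_rate: float = 0.0,
    days: int = 30,
) -> float:
    """Estimate monthly model spend; prices are per million tokens."""
    cost_per_request = (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000
    # Assumes cached responses incur no model-provider cost.
    billable_requests = requests_per_day * days * (1 - cache_hit_rate)
    return billable_requests * cost_per_request


# Illustrative prices, not any specific vendor's rate card.
PRICES = dict(input_price_per_m=3.0, output_price_per_m=15.0)

pilot = monthly_inference_cost(200, 1_500, 500, **PRICES)
production = monthly_inference_cost(50_000, 1_500, 500, **PRICES)
production_cached = monthly_inference_cost(50_000, 1_500, 500, cache_hit_rate=0.4, **PRICES)
print(f"pilot ${pilot:,.0f}/mo, production ${production:,.0f}/mo, "
      f"with 40% cache hits ${production_cached:,.0f}/mo")
```

With these assumed numbers, the pilot runs about $72 a month and production about $18,000. A 40% cache hit rate brings production down to about $10,800.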

We worked with a client whose AI costs in the first quarter ran more than 3x over projections.

The fix wasn’t to remove AI. It was to architect it properly.

We introduced caching, optimized prompt design, and routed lower-value requests to cheaper models.

The result was a significant cost reduction without sacrificing functionality.
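Routing lower-value requests to a cheaper model can be as simple as a rule on task type and prompt size. In this sketch, the model names, prices, and set of “low-value” task types are all hypothetical. In practice, the routing rule is a business decision as much as a technical one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                  # hypothetical model identifier
    price_per_m_tokens: float  # illustrative blended price, not a real rate card


CHEAP = ModelTier("small-model", 0.5)
PREMIUM = ModelTier("large-model", 10.0)

# Which task types count as "lower-value" is an assumption each team must make.
LOW_VALUE_TASKS = {"classify", "extract", "faq"}


def route(task_type: str, prompt: str, max_cheap_chars: int = 4_000) -> ModelTier:
    """Send routine, short requests to the cheaper model and everything else to the premium one."""
    if task_type in LOW_VALUE_TASKS and len(prompt) <= max_cheap_chars:
        return CHEAP
    return PREMIUM


def blended_cost(requests: list[tuple[str, str]], tokens_per_request: int = 1_000) -> float:
    """Total cost of a workload after routing, assuming a fixed token count per request."""
    return sum(route(task, prompt).price_per_m_tokens * tokens_per_request / 1_000_000
               for task, prompt in requests)
```

For example, a workload of nine FAQ requests and one contract review at 1,000 tokens each costs $0.0145 with routing, versus $0.10 if every request went to the premium tier.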

Cost architecture is not about cutting corners. It is about making intentional decisions about where and how AI is used.

Building AI That Actually Scales

There are a few principles that consistently separate successful AI systems from failed ones.

Design for scale from day one. Retrofitting architecture later is always more expensive.

Separate concerns across data, model, orchestration, and governance.

Monitor everything, from data inputs to model outputs to system performance.

Plan for cost as a core part of the architecture, not an afterthought.
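“Monitor everything” can start small. This sketch wraps any model call in a decorator that records latency and error counts in memory and reports a 95th-percentile latency. The names are hypothetical. A production system would export these metrics to an observability platform rather than keep them in module-level dictionaries.

```python
import statistics
import time
from collections import defaultdict
from functools import wraps
from typing import Callable

LATENCIES: dict[str, list[float]] = defaultdict(list)
ERRORS: dict[str, int] = defaultdict(int)


def monitored(name: str) -> Callable:
    """Record latency (success or failure) and error counts for every call to the wrapped function."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return fn(*args, **kwargs)
            except Exception:
                ERRORS[name] += 1
                raise
            finally:
                LATENCIES[name].append(time.perf_counter() - start)
        return wrapper
    return decorator


def p95_latency(name: str) -> float:
    """95th-percentile latency in seconds for the named call."""
    samples = LATENCIES[name]
    if len(samples) < 2:
        return samples[0] if samples else 0.0
    # With n=20 there are 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(samples, n=20, method="inclusive")[-1]
```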

This is the approach we take when building enterprise AI systems at Seisan, where the focus is not just on deploying AI, but on making it sustainable.

Architecture for the Long Game

Pilot projects are easy.

Production AI at enterprise scale is not.

The difference is architecture.

The companies that are winning with AI in 2026 are the ones that treat it as a system, not a feature.

If you are thinking about how to move from experimentation to production, that is exactly where we help organizations build the right foundation from day one.


At Seisan, we’ve built enterprise AI systems that actually work under production load. We architect for scale, security, and cost control from day one, not as afterthoughts when things break.

If you’re moving from experimentation to production, or if your current AI deployment isn’t performing as expected, let’s talk. We help organizations build the right foundation before problems become expensive.

Contact us to discuss your enterprise AI architecture needs.