A mid-sized company I worked with recently started exactly where most businesses do, with a simple API call to OpenAI. Within weeks, they had AI embedded into customer support, internal tools, and reporting workflows. It was fast, effective, and honestly… a little addictive.

Then the bill hit $50,000 a month.

At the same time, their security team raised concerns about sensitive customer data leaving their environment. Suddenly, what felt like an easy win turned into a strategic problem.

So they did what many companies consider next: “Let’s just run AI locally.”

What they found was sobering. GPUs were expensive, deployment was complex, and maintaining models required expertise they didn’t yet have. The answer wasn’t obvious anymore.

That’s the reality of AI deployment today. There is no one-size-fits-all answer, only tradeoffs.

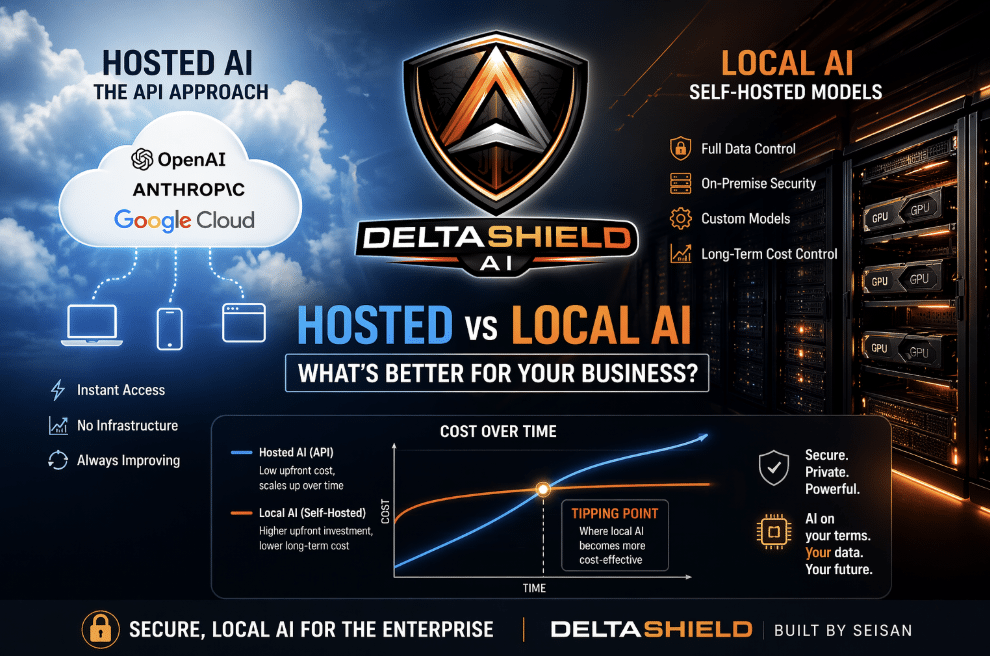

Hosted AI: The API Approach

Hosted AI is what most people mean when they talk about “using AI.” You send a request to a provider like OpenAI, Anthropic, or Google, and they return a response.
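To make that request/response loop concrete, here is a minimal sketch using only Python's standard library. It assumes OpenAI's Chat Completions endpoint; the model name, the helper names `build_chat_request` and `send`, and the API key are illustrative placeholders, and other providers use different URLs and payload shapes.

```python
import json
import urllib.request

# Assumption: OpenAI's Chat Completions endpoint. Other providers differ.
API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key: str, prompt: str,
                       model: str = "gpt-4o-mini") -> urllib.request.Request:
    """Build (but do not send) a single-turn chat request.

    The model name is a placeholder; use whatever your provider offers.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request over the network and return the model's reply text."""
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Note that the prompt travels inside the request body, so whatever you put in it (customer names, ticket contents, internal reports) is sent to the provider's servers.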

It’s simple, powerful, and incredibly accessible.

You don’t manage infrastructure. You don’t worry about model updates. You don’t need machine learning engineers on day one.

That’s why startups and even large enterprises begin here; it removes friction.

Hosted AI also evolves constantly. Providers continuously improve the models behind the scenes, so your application gets smarter over time without additional effort.

But that convenience comes at a cost, both literal and strategic.

Usage-based pricing scales fast, especially when AI becomes embedded in core workflows. And because your data is being sent externally, governance and compliance questions arise quickly.

Local AI: The Self-Hosted Approach

Running AI locally sounds straightforward: “Just download a model and run it.”

In reality, it’s much more involved.

You need specialized hardware (typically GPUs) to handle inference at scale. You need to choose the right model, fine-tune it for your use case, and continuously monitor performance.

And then there’s maintenance.

Models need updates. Infrastructure needs optimization. Engineers need to troubleshoot performance issues and latency bottlenecks.

“Local” doesn’t mean simple. It means control.

The companies that succeed here treat AI like a core system, not a plug-in. They invest in infrastructure, talent, and long-term architecture.

At Seisan, we’re seeing this firsthand as we build Delta Shield AI, a secure, private AI platform designed specifically for organizations that need local control without the typical operational burden.

Cost Comparison

This is where things get interesting, and where the numbers are often misunderstood.

At small scale, hosted AI wins almost every time.

You might spend a few hundred to a few thousand dollars per month. That’s far cheaper than spinning up GPU infrastructure or hiring specialized engineers.

But as usage grows, costs compound.

At mid-scale, companies often hit a tipping point. API costs start climbing into the tens of thousands of dollars per month, and finance teams begin asking hard questions.

At enterprise scale, the math flips.

A company processing millions of requests may find that a fixed infrastructure investment is more cost-effective than variable API pricing.

But here’s the catch: most companies underestimate hidden costs.

- Engineering time

- Infrastructure management

- Model optimization

- Downtime risk

These don’t show up on a simple pricing sheet.

For reference, cloud providers like Amazon Web Services offer pricing calculators that help estimate infrastructure costs.

In one real scenario, a company’s tipping point was roughly $30K/month in API spend. Below that, hosted AI was cheaper. Above that, local infrastructure started making financial sense, but only after a 6-month buildout period.

That delay matters. Opportunity cost is real.
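A rough way to see how the buildout delay pushes out the payback date is to compare cumulative spend month by month. The sketch below is a simplified model, not a pricing tool: the `LocalPlan` fields and every dollar figure in the usage example are hypothetical, and it assumes API spend stays flat, that you keep paying the API bill during buildout, and that buildout labor is folded into the upfront cost.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LocalPlan:
    upfront_cost: float          # hardware plus buildout labor, one-time (hypothetical)
    monthly_running_cost: float  # power, hosting, engineering time, maintenance
    buildout_months: int         # months before local infrastructure serves traffic


def cumulative_hosted(monthly_api_spend: float, months: int) -> float:
    """Total spend if you stay on the hosted API for `months` months."""
    return monthly_api_spend * months


def cumulative_local(plan: LocalPlan, monthly_api_spend: float, months: int) -> float:
    """Total spend if you start a local buildout at month 0.

    The API bill continues during buildout; afterwards only the local
    running cost applies.
    """
    api_months = min(months, plan.buildout_months)
    local_months = max(0, months - plan.buildout_months)
    return (plan.upfront_cost
            + api_months * monthly_api_spend
            + local_months * plan.monthly_running_cost)


def break_even_month(plan: LocalPlan, monthly_api_spend: float,
                     horizon_months: int = 60) -> Optional[int]:
    """First month where going local has cost no more than staying hosted.

    Returns None if that never happens within the horizon.
    """
    for month in range(1, horizon_months + 1):
        local = cumulative_local(plan, monthly_api_spend, month)
        if local <= cumulative_hosted(monthly_api_spend, month):
            return month
    return None


# Hypothetical figures, chosen only to illustrate the shape of the math.
plan = LocalPlan(upfront_cost=240_000, monthly_running_cost=15_000, buildout_months=6)
print(break_even_month(plan, monthly_api_spend=30_000))  # 22
print(break_even_month(plan, monthly_api_spend=10_000))  # None
```

With these made-up inputs, a $30K/month API bill pays back the local investment in month 22, while a $10K/month bill never does, because the local running cost alone exceeds it. The hidden costs listed above belong in `monthly_running_cost`; leaving them out makes local look cheaper than it is.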

Security and Data Control Comparison

This is often the deciding factor.

If you’re in healthcare, finance, government, or working with proprietary data, where your data lives matters.

Hosted providers do implement strong security measures. Many offer data isolation, encryption, and enterprise controls.

But your data is still leaving your environment.

For some organizations, that’s a non-starter.

Local AI keeps everything inside your network. You control access, storage, and processing end to end.

Frameworks such as the National Institute of Standards and Technology (NIST) AI Risk Management Framework outline practices for identifying and managing AI-related risks.

This is exactly why we’re building Delta Shield AI: to give organizations a way to deploy AI locally while maintaining enterprise-grade security and governance.

Because in many cases, it’s not about preference; it’s about compliance.

Why Delta Shield AI Is a Smart Bet for Local AI

Most companies don’t actually want to manage AI infrastructure; they want secure, reliable, and cost-effective outcomes. That’s where Seisan’s solution, Delta Shield AI, stands out. It delivers the power of local AI without the typical complexity.

Security That Matches Reality

Security is the biggest driver behind local AI adoption.

With hosted solutions, your data leaves your environment, even if it’s encrypted or not retained. That creates real concerns around compliance, intellectual property, and customer data exposure.

Delta Shield AI keeps everything inside your environment:

- Behind your firewall

- Within your access controls

- Fully auditable

True Data Privacy

Even when vendors promise not to train on your data, it’s still being transmitted and processed externally.

Delta Shield AI eliminates that risk entirely.

Your data:

- Never leaves your infrastructure

- Is processed locally

- Follows your governance policies

Predictable, Controlled Costs

Hosted AI starts cheap, but costs scale quickly.

What begins as a few hundred dollars a month can turn into tens of thousands as usage grows. That unpredictability becomes a major issue at scale.

Delta Shield AI offers a different model:

- Higher upfront investment

- Stable, predictable ongoing costs

- No runaway pricing tied to usage

Performance You Control

With hosted AI, you’re dependent on external systems for speed and uptime.

If there’s latency or downtime, you’re stuck waiting.

Delta Shield AI gives you control:

- Lower latency for real-time applications

- Reliable performance within your network

- Independence from third-party outages

Built for Business

Most local AI efforts fail because they’re treated like experiments.

Delta Shield AI is built as a production-ready platform, not a science project. That means:

- Structured access controls

- Audit logging

- Scalable architecture

- Integration with real business workflows

It turns AI from a technical capability into a business asset.

Make the Right AI Deployment Decision

Hosted or local isn’t a technology question. It’s a business question.

The right answer depends on your data sensitivity, usage scale, compliance requirements, and long-term strategy. Most companies get this wrong by following trends instead of evaluating their actual constraints.

At Seisan, we’ve built AI systems both ways. We know when hosted APIs make sense and when they become liabilities. We help companies make deployment decisions based on real requirements, not hype.

And for organizations that need local AI without the typical operational burden, we built Delta Shield AI: secure, private, production-ready AI infrastructure that keeps your data inside your environment while eliminating the complexity of self-hosting.

Ready to figure out which approach actually works for your business? Contact us to discuss your AI deployment strategy.